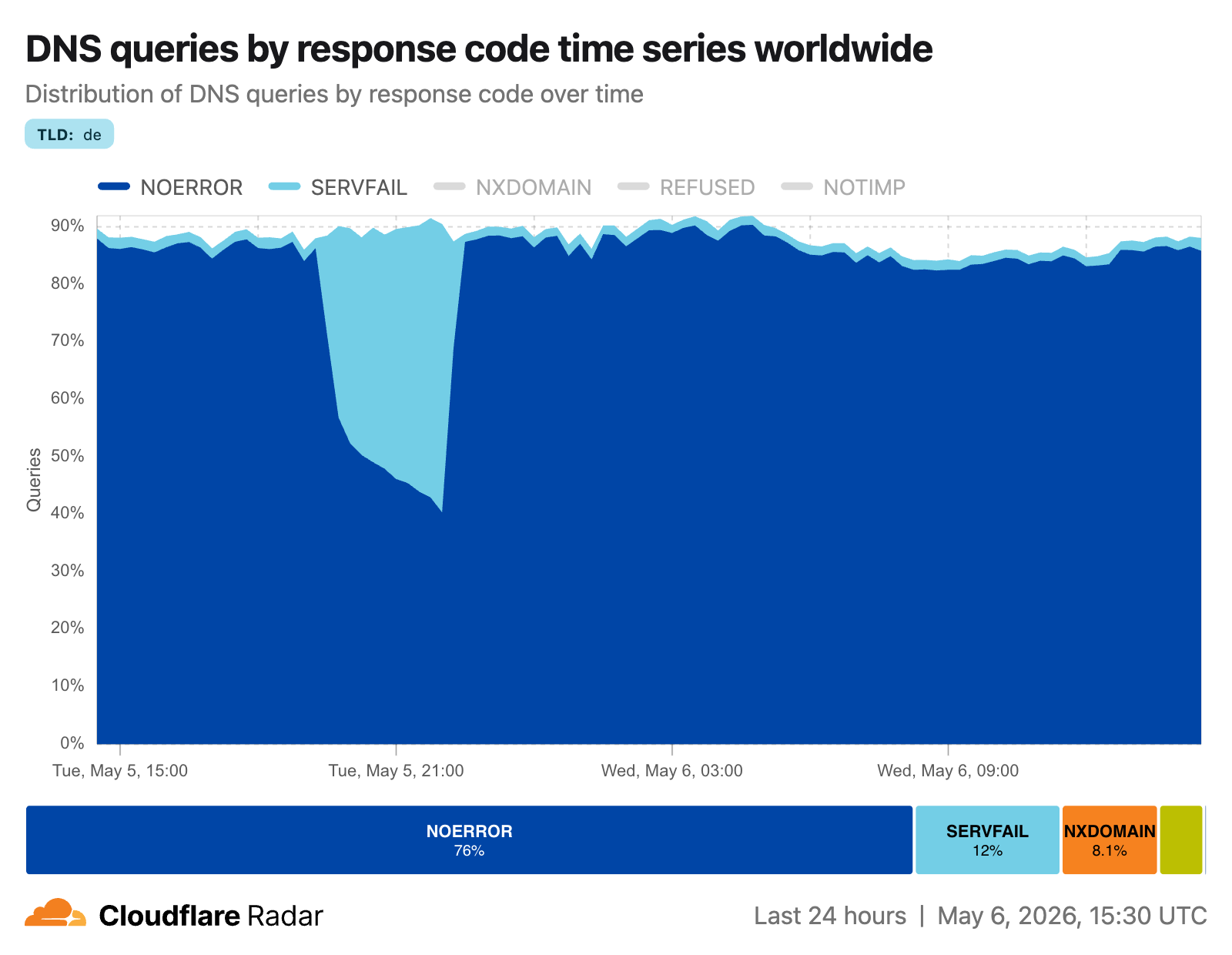

On May 5, 2026, at roughly 19:30 UTC, DENIC, the registry operator for the .de country-code top-level domain (TLD), started publishing incorrect DNSSEC signatures for the .de zone. Any validating DNS resolver receiving these signatures, including 1.1.1.1, the public DNS resolver operated by Cloudflare, was required by the DNSSEC specification to reject them and return SERVFAIL to clients.

The country-code top-level domain for Germany, .de, is one of the largest on the Internet. On Cloudflare Radar, it consistently ranks among the most broadly queried TLDs globally. An outage at this level of the DNS hierarchy has the potential to make millions of domains unreachable.

In this post, we’ll walk through what we saw, the impact of these events, and how we applied temporary mitigations while DENIC resolved the issue.

How DNSSEC works

DNSSEC (Domain Name System Security Extensions) adds cryptographic authentication to DNS. When a zone is signed with DNSSEC, each set of records is accompanied by a digital signature, known as an RRSIG record, that lets a resolver verify the records haven’t been tampered with. Unlike encrypted DNS protocols, such as DNS over TLS (DoT) and DNS over HTTPS (DoH), DNSSEC is about integrity, not privacy. The records are visible, but their authenticity can be proven.

What makes DNSSEC unique is that the signatures travel together with the records they protect. This means integrity can be verified regardless of how many caches or hops a response has passed through. A cached record is just as verifiable as a fresh one.

DNSSEC is built on a chain of trust. Starting at the root zone, whose trust anchor is hard-coded into resolvers, each zone delegates trust to its child zones via Delegation Signer (DS) records. A DS record in the parent zone contains a cryptographic hash of a public key in the child zone. When a resolver validates example.de, it verifies the chain: root trusts .de, and .de trusts example.de.
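
To make the DS link concrete, here is a minimal Python sketch of the check a resolver performs on one link of the chain, assuming the SHA-256 digest type described in RFC 4034 and RFC 4509. The helper names and the key bytes are hypothetical and chosen for illustration only. This is not how 1.1.1.1 is implemented, and a real validator also verifies the RRSIG signatures, which this sketch leaves out.

```python
import hashlib
import struct


def name_to_wire(name: str) -> bytes:
    """Encode a domain name in canonical (lowercase) DNS wire format."""
    wire = b""
    for label in name.lower().rstrip(".").split("."):
        if label:  # the root name has no labels
            wire += bytes([len(label)]) + label.encode("ascii")
    return wire + b"\x00"  # terminating root label


def dnskey_rdata(flags: int, protocol: int, algorithm: int, public_key: bytes) -> bytes:
    """Build DNSKEY RDATA: flags (2 bytes), protocol (1), algorithm (1), public key."""
    return struct.pack("!HBB", flags, protocol, algorithm) + public_key


def ds_digest_sha256(owner: str, rdata: bytes) -> bytes:
    """DS digest (type 2): SHA-256 over owner name in wire format plus DNSKEY RDATA."""
    return hashlib.sha256(name_to_wire(owner) + rdata).digest()


def ds_matches(ds_digest: bytes, owner: str, rdata: bytes) -> bool:
    """Return True if the parent's DS digest matches the child's DNSKEY."""
    return ds_digest == ds_digest_sha256(owner, rdata)


# Hypothetical keys: flags 257 marks a KSK, algorithm 13 is ECDSA P-256.
# The key material is placeholder bytes, not a real public key.
published_ksk = dnskey_rdata(257, 3, 13, b"\x01" * 64)
unexpected_ksk = dnskey_rdata(257, 3, 13, b"\x02" * 64)

# The digest the parent zone would publish in its DS record for "de."
parent_ds = ds_digest_sha256("de.", published_ksk)

print(ds_matches(parent_ds, "de.", published_ksk))   # True: the link holds
print(ds_matches(parent_ds, "de.", unexpected_ksk))  # False: the link is broken
```

The second call shows why a mismatch cannot be worked around locally: the resolver has no way to tell an operational mistake from tampering, so it has to treat the link as broken.
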
A break anywhere in that chain causes validation to fail for everything below it, which is why a misconfiguration at a TLD like .de affects every domain under it.

Zones typically use two types of keys: a Zone Signing Key (ZSK), used to sign the zone’s records, and a Key Signing Key (KSK), used to sign the zone’s DNSKEY records, including the ZSK. The KSK’s public key is what the parent zone’s DS record points to, anchoring the chain of trust. Rotating a ZSK is relatively straightforward: generate a new key, re-sign the zone’s records, and wait for caches to expire. Rotating a KSK is more involved, because the parent’s DS record must also be updated, often requiring coordination with a registrar or registry.

During a key rotation, there is a critical window where the old key is being phased out and the new one phased in. If the signatures published in the zone are made with a key that resolvers cannot verify against the zone’s published DNSKEY records, whether because the signing step failed, the timing was wrong, or the new key wasn’t fully distributed yet, resolvers have no choice but to reject the responses and return SERVFAIL.

What we saw

On May 5, 2026, at roughly 19:30 UTC, DENIC, the operator for the .de TLD, started publishing incorrect DNSSEC signatures for the .de zone. Any validating resolver receiving these records was required by the DNSSEC specification to reject them and return SERVFAIL. 1.1.1.1 was no exception.

The graph below shows the response codes 1.1.1.1 returned for .de queries during the incident. After the immediate spike in SERVFAILs at 19:30 UTC, the SERVFAIL rate climbed steadily over the following three hours as cached records gradually expired. As each domain's cached records expired and resolvers went back to DENIC for fresh copies, they received broken signatures and started failing.

Also visible is a large increase in query volume. This is typical during DNS incidents, as clients retry failed queries, often three or more times, inflating the raw numbers.
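
As a rough illustration of that inflation, the sketch below compares the raw SERVFAIL count with the number of distinct (client, name) pairs that failed. The `Query` record and its fields are hypothetical stand-ins for a query log, not the format 1.1.1.1 uses, and the sample data is invented for the example.

```python
from typing import Iterable, NamedTuple


class Query(NamedTuple):
    """One hypothetical query-log entry."""
    client: str
    qname: str
    rcode: str


def servfail_counts(queries: Iterable[Query]) -> tuple[int, int]:
    """Return (raw SERVFAIL responses, distinct client/name pairs that failed)."""
    raw = 0
    failed_pairs = set()
    for q in queries:
        if q.rcode == "SERVFAIL":
            raw += 1
            # DNS names are case-insensitive, so normalize before deduplicating.
            failed_pairs.add((q.client, q.qname.lower()))
    return raw, len(failed_pairs)


# Invented sample: one client retries the same name three times.
sample_log = [
    Query("192.0.2.1", "example.de.", "SERVFAIL"),
    Query("192.0.2.1", "example.de.", "SERVFAIL"),
    Query("192.0.2.1", "example.de.", "SERVFAIL"),
    Query("192.0.2.2", "example.de.", "SERVFAIL"),
    Query("192.0.2.2", "example.com.", "NOERROR"),
]

raw, distinct = servfail_counts(sample_log)
print(raw, distinct)  # 4 2
```

Here four SERVFAIL responses correspond to only two failed lookups from a user's point of view, which is the effect to keep in mind when reading raw response-code graphs.
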
The SERVFAIL rate looks more alarming than the actual user impact, as many of those queries represent the same user retrying the same domain.

What might be surprising is that the NOERROR rate stayed relatively stable throughout the incident. That's “serve stale” at work, which we'll cover in the next section.

Serve stale

Recursive resolvers cache the records they receive from authoritative nameservers for the duration of each record's TTL (Time-to-Live). While a record is cached, the resolver serves it directly without going back to the authoritative nameserver. When the TTL expires, the resolver fetches a fresh copy and re-caches it.

During the outage, lookups that required a fresh fetch ended in SERVFAIL: the DNSSEC signatures were broken, and the resolver correctly rejected them. But many .de records were still sitting in cache from before the incident began. Rather than immediately discarding those and returning SERVFAIL to users, 1.1.1.1 continued serving them past their TTL. This is called “serving stale.”

1.1.1.1 implements RFC 8767, which formalizes this behavior. When upstream resolution fails, a resolver may continue serving expired cached records rather than returning an error. This significantly cushions the impact of an upstream outage, buying time for operators to respond.

The result is visible in the graph below, which shows response codes for .de queries during the incident, excluding the stale-served responses. Without stale-served responses, the NOERROR rate drops steadily from 19:30 onward. The difference represents queries that received good answers only because their record was still in cache.

Our mitigation

While the issue was largely out of our control, and serve stale was doing its job, there was still real impact for many users. Luckily, there were some actions we were able to take to improve the situation.

Negative Trust Anchors

RFC 7646 defines the concept of a Negative Trust Anchor (NTA).
In normal DNSSEC operation, a validating resolver maintains a set of trust anchors: public keys a

Originally published at blog.cloudflare.com