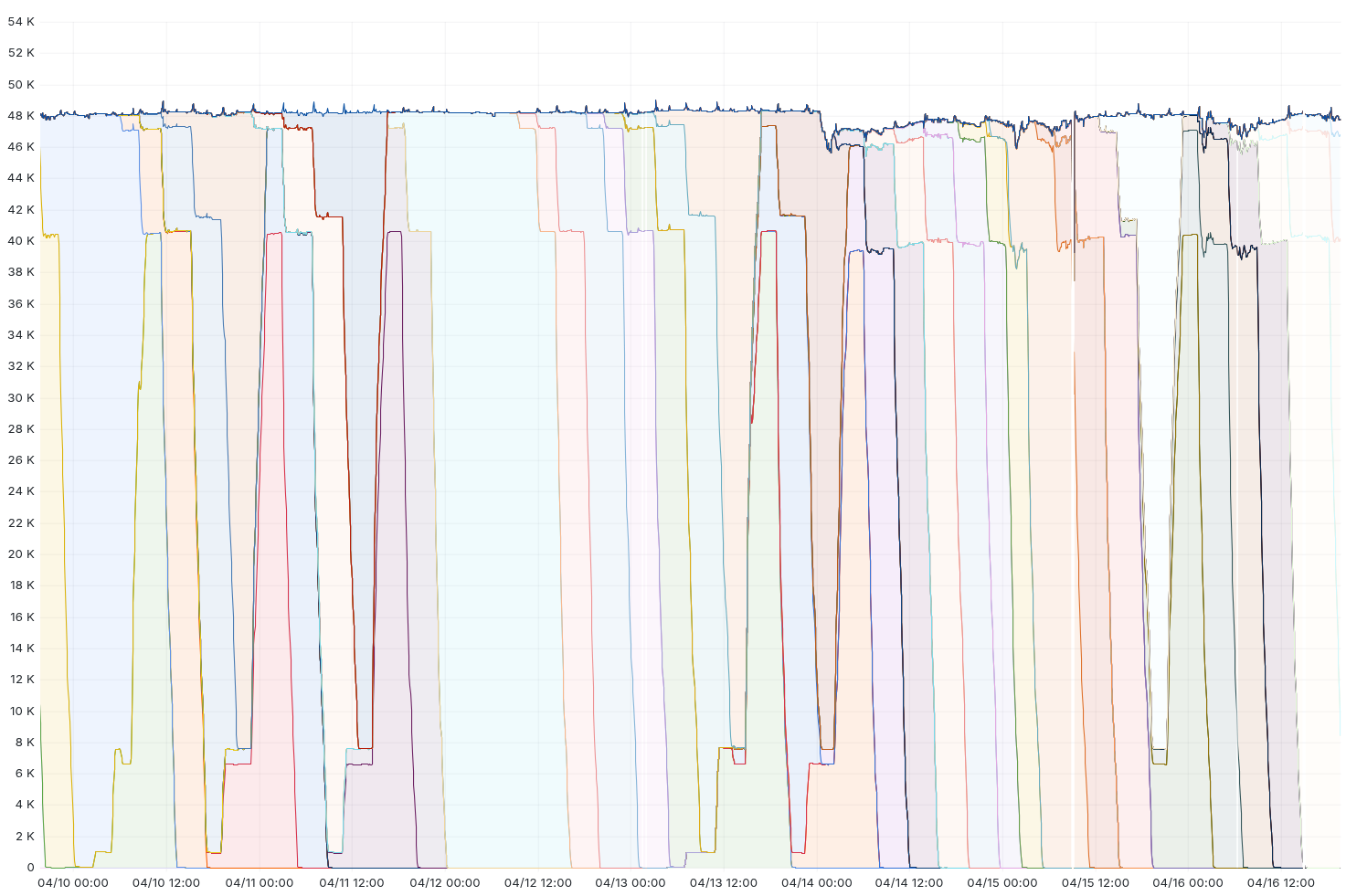

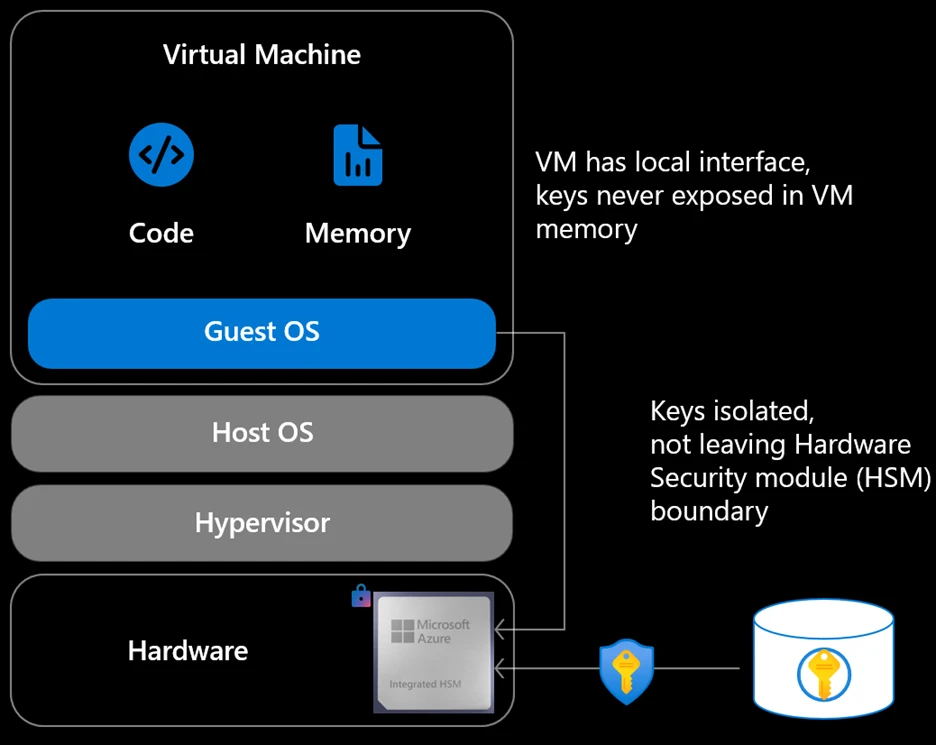

Over the past two and a bit quarters, we've undertaken an intensive engineering effort, internally code-named "Code Orange: Fail Small", focused on making Cloudflare's infrastructure more resilient, secure, and reliable for every customer.

Earlier this month, the Cloudflare team finished this work. While improving resiliency will never be a “job done” and will always be a top priority across our development lifecycle, we have now completed the work that would have avoided the November 18, 2025 and December 5, 2025 global outages.

This work focused on several key areas: safer configuration changes, reducing the impact of failure, and revising our “break glass” procedures and incident management. We also introduced measures to prevent drift and regressions over time, and strengthened the way we communicate to our customers during an outage. Here we explain in depth what we shipped, and what it means for you.

Safer configuration changes

What it means for you: In most cases, Cloudflare internal configuration changes no longer reach our network instantly and are instead rolled out progressively with real-time health monitoring. This allows our observability tools to catch problems and revert changes before they affect your traffic.

To catch potentially dangerous deployments before they reach production, we've identified high-risk configuration pipelines and built new tools to manage configuration changes better.

For products that run on our network, process customer traffic, and receive configuration changes, we no longer deploy these changes instantly across the network. Instead, relevant teams have adopted a “health-mediated deployment” methodology, the same one we use when releasing software, for all configuration deployments. This includes but is not limited to the product teams that were directly affected by the incidents.
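To make the idea of health-mediated deployment concrete, here is a minimal sketch of a staged rollout that checks health after each stage and reverts on failure. It is illustrative only and is not Cloudflare's implementation: the function name, the `apply` and `is_healthy` callbacks, and the stage fractions are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class RolloutResult:
    completed: bool       # True if every stage passed its health check
    stages_applied: int   # number of stages that received the new version
    active_version: str   # version left in place when the rollout ended


def health_mediated_rollout(
    new_version: str,
    old_version: str,
    stages: Sequence[float],
    apply: Callable[[str, float], None],
    is_healthy: Callable[[float], bool],
) -> RolloutResult:
    """Release new_version stage by stage; revert to old_version on bad health.

    `stages` holds hypothetical traffic fractions (for example 0.01 for 1%).
    `apply(version, fraction)` pushes a version to that fraction of the fleet.
    `is_healthy(fraction)` reports whether health signals look normal there.
    """
    applied = 0
    for fraction in stages:
        apply(new_version, fraction)
        applied += 1
        if not is_healthy(fraction):
            # Automated rollback: restore the previous version wherever
            # the new one has reached, then stop the rollout.
            apply(old_version, fraction)
            return RolloutResult(False, applied, old_version)
    return RolloutResult(True, applied, new_version)


if __name__ == "__main__":
    history = []
    result = health_mediated_rollout(
        "v2",
        "v1",
        stages=[0.01, 0.10, 0.50, 1.0],
        apply=lambda version, fraction: history.append((version, fraction)),
        is_healthy=lambda fraction: fraction < 0.10,  # simulate a failure at 10%
    )
    print(result)
    print(history)
```

The property that matters is that a change never reaches the next stage unless the previous stage stayed healthy, so a bad change stops at a small fraction of traffic.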
Central to this is a new internal component we call Snapstone, which we built to bring health-mediated deployment to configuration changes. Snapstone is a system that bundles a configuration change into a package, and then allows gradual release of that change following health mediation principles. Before Snapstone, applying this methodology to config was possible but difficult. It required significant per-team effort and wasn't consistently applied across the network. Snapstone closes this gap by providing a unified way to bring progressive rollout, real-time health monitoring, and automated rollback to configuration deployments by default.

What makes Snapstone particularly powerful is its flexibility. Rather than being a fix for specific past failures, Snapstone allows teams to dynamically define any unit of configuration that needs health mediation, whether that's a data file like the one that caused the November 18 outage, or a control flag in our global configuration system like the one involved in the December 5 outage. Teams create these configuration units on demand, and Snapstone ensures they are deployed safely everywhere they're used.

This gives us something we didn't have before: when a risk review or operational experience identifies a dangerous configuration pattern, the fix is straightforward: bring it into Snapstone, and the configuration pattern immediately inherits safe deployment.

Reducing the impact of failure

What it means for you: In the event an issue is observed on our network, our systems now fail more gracefully. This vastly reduces the potential impact radius, to ensure your traffic is delivered even in worst-case scenarios.

Product teams have carefully reviewed, both manually and programmatically, the potential failure modes of products that are critical for serving customer traffic. Teams have removed non-essential runtime dependencies and implemented better failure modes.
We will now use the last known good configuration where possible (“fail stale”). Where that isn’t possible, we have reviewed each failure case and implemented “fail open” or “fail closed”, depending on whether serving traffic with reduced functionality is preferable to failing to serve traffic.

Let’s look at an example of how this works. Our November 2025 outage was triggered by a failed rollout of our Bot Management detection machine learning classifier. Under our new procedures, if data were generated again that our system could not read, the system would refuse to use the updated configuration and instead use the old configuration. If the old configuration were not available for some reason, it would fail open to ensure customer production traffic continues to be served, which is a much better outcome than downtime.

As a result, if the same Bot Management change that caused the failure in November were to roll out now, the system would detect the failure at an early stage of the deployment, before it had affected anything more than a small percentage of traffic.

We have also begun further segmenting our system so that independent copies of services run for different cohorts of traffic. Cloudflare already uses these customer cohorts for blast radius mitigation with traffic management techniques today, and this additional process segmentation work provides a powerful reliability capability for us going forward.

For example, the Workers runtime system is segmented into multiple independent services handling different cohorts of traffic, with one handling only traffic for our free customers. Changes are deployed to these segments based on customer cohorts, starting with free customers. We’re also sending updates more quickly and frequently to the least critical segments, and at a slower pace to the most critical segments.
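The “fail stale”, then “fail open” ordering described in the Bot Management example above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the actual Bot Management code: the JSON format, the `features` key, the `MAX_FEATURES` limit, and the class and function names are all hypothetical.

```python
import json
from typing import Optional

MAX_FEATURES = 200  # hypothetical validation limit, chosen only for this sketch


def parse_config(raw: str) -> dict:
    """Parse and validate a config payload; raise ValueError if it is unusable."""
    config = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    features = config.get("features") if isinstance(config, dict) else None
    if not isinstance(features, list) or len(features) > MAX_FEATURES:
        raise ValueError("config failed validation")
    return config


class ConfigHolder:
    """Keeps the last known good config and never replaces it with a bad one."""

    def __init__(self) -> None:
        self.last_known_good: Optional[dict] = None

    def update(self, raw: str) -> str:
        """Try to adopt a new config and report the resulting mode."""
        try:
            self.last_known_good = parse_config(raw)
            return "fresh"
        except ValueError:
            if self.last_known_good is not None:
                # Fail stale: reject the update, keep serving with the old config.
                return "fail-stale"
            # Fail open: nothing usable at all, so serve without this feature.
            return "fail-open"

    def handle_request(self) -> str:
        """Traffic is served in every mode; only the scoring step is optional."""
        if self.last_known_good is None:
            return "served without scoring"
        return "served with scoring"


if __name__ == "__main__":
    holder = ConfigHolder()
    print(holder.update("not valid json"), holder.handle_request())
    print(holder.update('{"features": ["a", "b"]}'), holder.handle_request())
    print(holder.update('{"features": 5}'), holder.handle_request())
```

Whether a given module should fail open or fail closed when no usable configuration exists is a per-product decision; this sketch shows only the fail-open branch.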
As a result, if a change were deployed to the Workers runtime system and it broke traffic, it would now only affect a small percentage of our free customers before being automatically detected and rolled back.

Sticking to the Workers runtime system as an example, in a seven-day period earlier this month, the

Originally published at blog.cloudflare.com